Continuing the post series on Unified APIs and Federated Gateways:

Azure API Management / AWS API Gateway

In the Unified API section above, I wrote that achieving a unified API requires features such as security, discoverability, and API management.

You can get quite far toward a Unified API with just Azure API Management or AWS API Gateway, because many of the required features are available through their built-in functionality. Looking at the image in the first post on the Evolution of API Architecture, I would say that with automation and proper configuration you can achieve a Gateway Aggregation Layer style of API functionality, but I am not sure you can achieve a federated gateway. With the support of other tools, though, it might be possible to have the Azure and AWS API gateways work with the backend services in a similar way.

My solutions would still need an API gateway, and using something prebuilt and tested, like what Azure or AWS offers, is reasonable.

Service Mesh

For the service mesh explanation, I will quote existing sources and refer to them. I don’t think I can explain a service mesh any better:

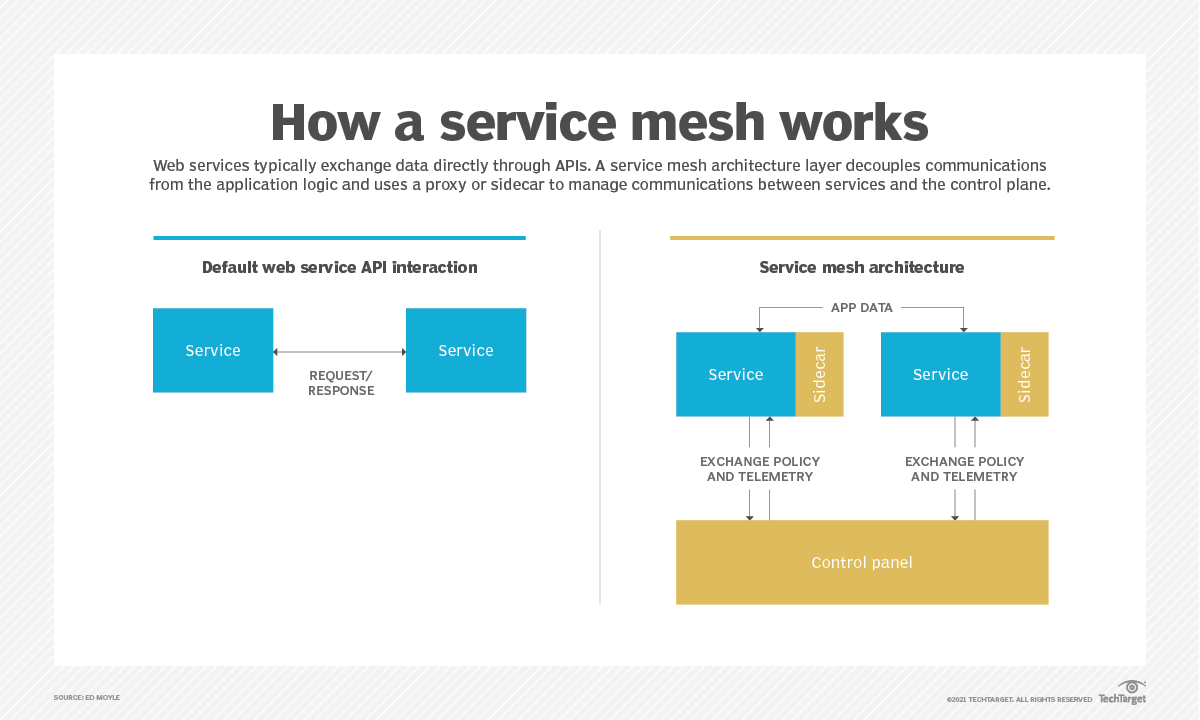

A service mesh is a configurable, low‑latency infrastructure layer designed to handle a high volume of network‑based interprocess communication among application infrastructure services using application programming interfaces (APIs). A service mesh ensures that communication among containerized and often ephemeral application infrastructure services is fast, reliable, and secure. The mesh provides critical capabilities including service discovery, load balancing, encryption, observability, traceability, authentication and authorization, and support for the circuit breaker pattern.

https://www.nginx.com/blog/what-is-a-service-mesh/

The service mesh is usually implemented by providing a proxy instance, called a sidecar, for each service instance. Sidecars handle interservice communications, monitoring, and security‑related concerns – indeed, anything that can be abstracted away from the individual services. This way, developers can handle development, support, and maintenance for the application code in the services; operations teams can maintain the service mesh and run the app.

https://www.nginx.com/blog/what-is-a-service-mesh/

Service mesh benefits and drawbacks

Some advantages of a service mesh are as follows:

- Simplifies communication between services in both microservices and containers.

- Easier to diagnose communication errors, because they would occur on their own infrastructure layer.

- Supports security features such as encryption, authentication and authorization.

- Allows for faster development, testing and deployment of an application.

- Sidecars placed next to a container cluster are effective in managing network services.

https://searchitoperations.techtarget.com/definition/service-mesh

Some downsides to service mesh are as follows:

- Runtime instances increase through use of a service mesh.

- Each service call must first run through the sidecar proxy, which adds a step.

- Service meshes do not address integration with other services or systems, and routing type or transformation mapping.

- Network management complexity is abstracted and centralized, but not eliminated; someone must integrate service mesh into workflows and manage its configuration.

https://searchitoperations.techtarget.com/definition/service-mesh

Terminology

Container orchestration framework. As more and more containers are added to an application’s infrastructure, a separate tool for monitoring and managing the set of containers – a container orchestration framework – becomes essential. Kubernetes seems to have cornered this market, with even its main competitors, Docker Swarm and Mesosphere DC/OS, offering integration with Kubernetes as an alternative.

Services and instances (Kubernetes pods). An instance is a single running copy of a microservice. Sometimes the instance is a single container; in Kubernetes, an instance is made up of a small group of interdependent containers (called a pod). Clients rarely access an instance or pod directly; rather they access a service, which is a set of identical instances or pods (replicas) that is scalable and fault‑tolerant.

Sidecar proxy. A sidecar proxy runs alongside a single instance or pod. The purpose of the sidecar proxy is to route, or proxy, traffic to and from the container it runs alongside. The sidecar communicates with other sidecar proxies and is managed by the orchestration framework. Many service mesh implementations use a sidecar proxy to intercept and manage all ingress and egress traffic to the instance or pod.

Service discovery. When an instance needs to interact with a different service, it needs to find – discover – a healthy, available instance of the other service. Typically, the instance performs a DNS lookup for this purpose. The container orchestration framework keeps a list of instances that are ready to receive requests and provides the interface for DNS queries.
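
As a rough illustration of the DNS-based lookup described above (this is my own sketch, not part of the quoted article), the following Python snippet resolves a service name to the addresses behind it. The helper name `resolve_instances` and the hostname mentioned in the comment are assumptions made for the example.

```python
import socket


def resolve_instances(host: str, port: int) -> list:
    """Return the unique IP addresses that the resolver reports for a name."""
    # getaddrinfo asks the system resolver, which inside a cluster is
    # typically backed by the orchestration framework's DNS service.
    results = socket.getaddrinfo(host, port, proto=socket.IPPROTO_TCP)
    # Each result is (family, type, proto, canonname, sockaddr);
    # sockaddr[0] is the IP address for both IPv4 and IPv6.
    return sorted({sockaddr[0] for *_, sockaddr in results})


if __name__ == "__main__":
    # A literal IP needs no DNS server, so this line runs anywhere.
    print(resolve_instances("127.0.0.1", 8080))
    # Inside a Kubernetes cluster the name would look something like
    # "orders.default.svc.cluster.local" (hypothetical service "orders").
```

With a service mesh, this lookup and the choice of a healthy instance are usually handled by the sidecar rather than by application code like this.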

Load balancing. Most orchestration frameworks already provide Layer 4 (transport layer) load balancing. A service mesh implements more sophisticated Layer 7 (application layer) load balancing, with richer algorithms and more powerful traffic management. Load‑balancing parameters can be modified via API, making it possible to orchestrate blue‑green or canary deployments.
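
To make the canary idea a bit more concrete, here is a minimal sketch of weighted routing between two versions of a service (my own illustration, not from the quoted article). The function name `pick_version` and the 90/10 split are assumptions; a real mesh applies such weights inside the proxy through its own configuration.

```python
import random


def pick_version(weights: dict, rng: random.Random) -> str:
    """Pick a backend version at random, proportionally to its weight."""
    versions = list(weights)
    return rng.choices(versions, weights=[weights[v] for v in versions], k=1)[0]


if __name__ == "__main__":
    # Hypothetical canary split: about 90% of requests to v1, 10% to v2.
    canary = {"v1": 90, "v2": 10}
    rng = random.Random(42)  # seeded so runs are repeatable
    counts = {"v1": 0, "v2": 0}
    for _ in range(10_000):
        counts[pick_version(canary, rng)] += 1
    print(counts)
```

Shifting the weights step by step toward the new version gives a canary rollout, while switching them in one move from 100/0 to 0/100 corresponds to a blue-green deployment.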

Encryption. The service mesh can encrypt and decrypt requests and responses, removing that burden from each of the services. The service mesh can also improve performance by prioritizing the reuse of existing, persistent connections, which reduces the need for the computationally expensive creation of new ones. The most common implementation for encrypting traffic is mutual TLS (mTLS), where a public key infrastructure (PKI) generates and distributes certificates and keys for use by the sidecar proxies.

Authentication and authorization. The service mesh can authorize and authenticate requests made from both outside and within the app, sending only validated requests to instances.

Support for the circuit breaker pattern. The service mesh can support the circuit breaker pattern, which isolates unhealthy instances, then gradually brings them back into the healthy instance pool if warranted.

https://www.nginx.com/blog/what-is-a-service-mesh/
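
The circuit breaker pattern mentioned in the terminology above can be sketched in a few lines of Python. This is a simplified illustration under my own assumptions (the class name, the thresholds and the single trial call after the cool-down are all hypothetical), not how any particular mesh implements it.

```python
import time


class CircuitBreaker:
    """Minimal circuit breaker: closed -> open -> half-open -> closed."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise RuntimeError("circuit open: call rejected")
            # Cool-down elapsed: half-open, let one trial call through.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.max_failures:
                self.opened_at = self.clock()  # open (or re-open) the circuit
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

In a service mesh this logic lives in the sidecar proxy, so the application code does not have to carry it.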

Service Mesh Products and Services

- AWS App Mesh

- Buoyant Conduit

- F5 Nginx Service Mesh

- Google Anthos Service Mesh

- HashiCorp Consul

- Istio

- Kong Mesh

- Kuma

- Linkerd

- Red Hat OpenShift Service Mesh

- Solo.io Gloo Mesh

- Tetrate

- Tigera Calico Cloud

- Traefik Labs

- VMware Tanzu Service Mesh

- Azure: Container Instances, Service Fabric managed clusters, Azure Kubernetes Service (AKS)

DAPR

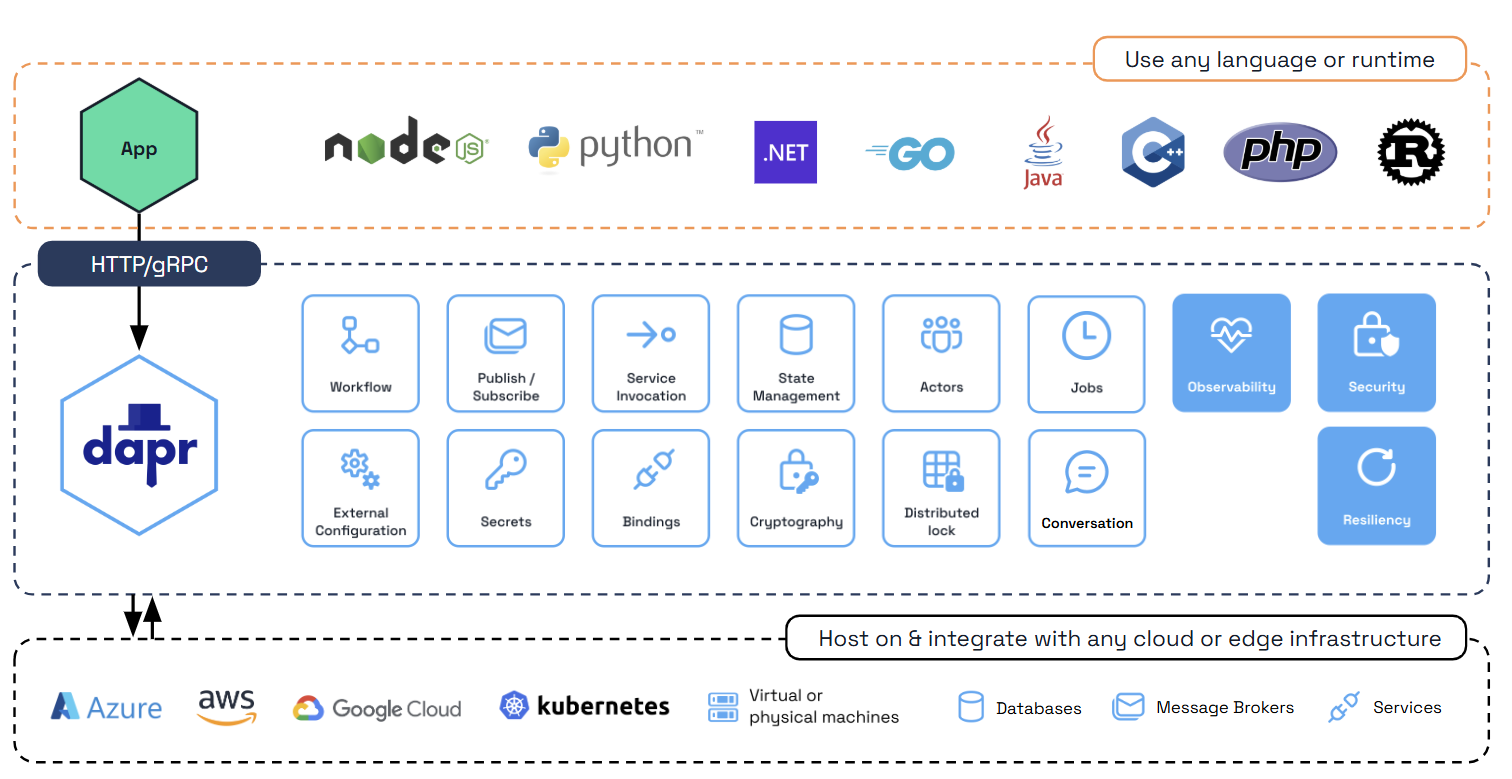

Dapr is an interesting project from a developer’s point of view. It looks similar to a service mesh, but it is different. For now, I’ll let the Dapr documentation explain what it does. Later I will explain how it is going to be used in my project.

Dapr is a portable, event-driven runtime that makes it easy for any developer to build resilient, stateless and stateful applications that run on the cloud and edge and embraces the diversity of languages and developer frameworks.

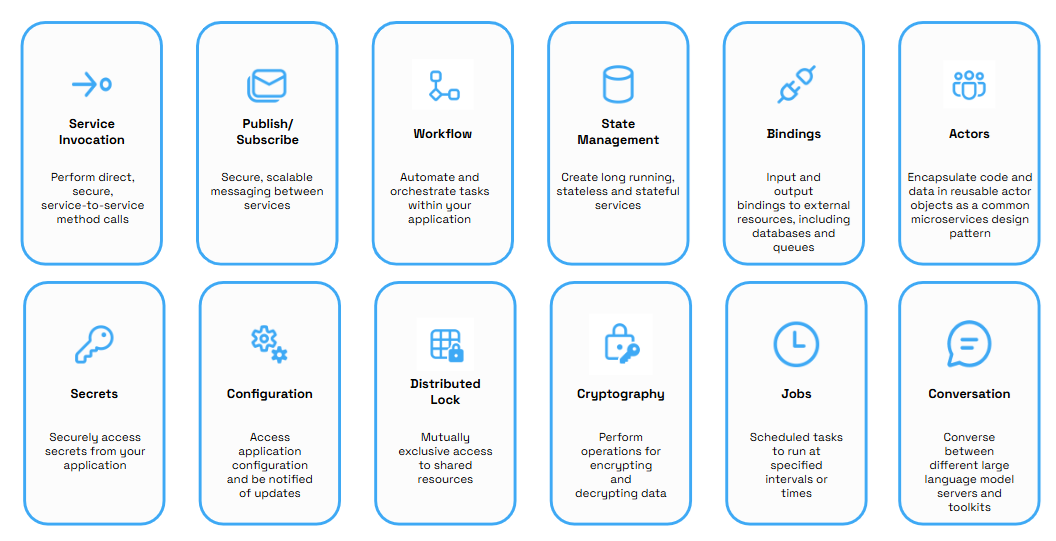

Dapr codifies the best practices for building microservice applications into open, independent APIs called building blocks, that enable you to build portable applications with the language and framework of your choice. Each building block is completely independent and you can use one, some, or all of them in your application.

Using Dapr you can incrementally migrate your existing applications to a microservices architecture, thereby adopting cloud native patterns such as scale out/in, resiliency and independent deployments.

In addition, Dapr is platform agnostic, meaning you can run your applications locally, on any Kubernetes cluster, on virtual or physical machines and in other hosting environments that Dapr integrates with. This enables you to build microservice applications that can run on the cloud and edge.

https://docs.dapr.io/concepts/overview/

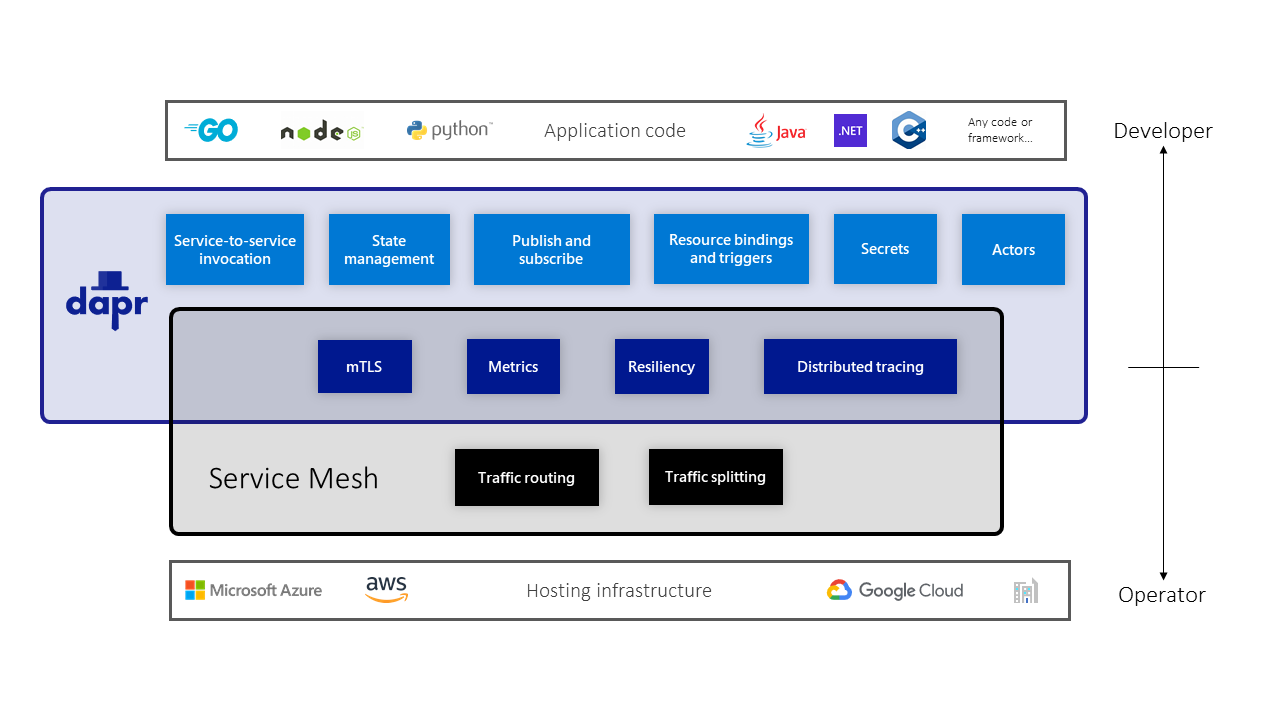

Dapr and service meshes

While Dapr and service meshes do offer some overlapping capabilities, Dapr is not a service mesh, where a service mesh is defined as a networking service mesh. Unlike a service mesh which is focused on networking concerns, Dapr is focused on providing building blocks that make it easier for developers to build applications as microservices. Dapr is developer-centric, versus service meshes which are infrastructure-centric.

In most cases, developers do not need to be aware that the application they are building will be deployed in an environment which includes a service mesh, since a service mesh intercepts network traffic. Service meshes are mostly managed and deployed by system operators, whereas Dapr building block APIs are intended to be used by developers explicitly in their code.

https://docs.dapr.io/concepts/service-mesh/
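
To show what using a building block explicitly in code can look like, here is a minimal Python sketch that calls another service through the local Dapr sidecar using the HTTP service invocation API. The app id `orders` and the method `checkout` are hypothetical names, and the helper functions are my own; only the URL shape and the `DAPR_HTTP_PORT` variable come from Dapr.

```python
import json
import os
import urllib.request
from typing import Optional


def invoke_url(app_id: str, method: str, dapr_port: Optional[int] = None) -> str:
    """Build the sidecar URL for Dapr's HTTP service invocation API."""
    if dapr_port is None:
        # Dapr sets DAPR_HTTP_PORT for the app; 3500 is the usual default.
        dapr_port = int(os.environ.get("DAPR_HTTP_PORT", "3500"))
    return f"http://localhost:{dapr_port}/v1.0/invoke/{app_id}/method/{method}"


def invoke(app_id: str, method: str, payload: dict) -> bytes:
    """POST a JSON payload to another service through the local sidecar."""
    request = urllib.request.Request(
        invoke_url(app_id, method),
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return response.read()


if __name__ == "__main__":
    # "orders" and "checkout" are hypothetical; actually calling invoke()
    # requires a running Dapr sidecar next to this process.
    print(invoke_url("orders", "checkout", dapr_port=3500))
```

The application only ever talks to `localhost`; finding the target service, encrypting the call and retrying it are left to the sidecar.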

Some common capabilities that Dapr shares with service meshes include:

- Secure service-to-service communication with mTLS encryption

- Service-to-service metric collection

- Service-to-service distributed tracing

- Resiliency through retries

https://docs.dapr.io/concepts/service-mesh/
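
For the last capability, resiliency through retries, the following sketch shows the kind of retry loop with exponential backoff that Dapr or a service mesh takes out of the application. The function name, the attempt count and the delays are assumptions for illustration, not Dapr defaults.

```python
import time


def call_with_retries(func, attempts=3, base_delay=0.1, sleep=time.sleep):
    """Call func, retrying on any exception with exponential backoff."""
    for attempt in range(attempts):
        try:
            return func()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            # Wait base_delay, then twice that, then four times, and so on.
            sleep(base_delay * 2 ** attempt)
```

When the sidecar does this instead, every service gets the same behaviour without each team writing and tuning its own loop.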

Sources for this blog post

https://www.apollographql.com/docs/

https://the-guild.dev/blog/graphql-mesh

https://netflixtechblog.com/how-netflix-scales-its-api-with-graphql-federation-part-1-ae3557c187e2

https://netflixtechblog.com/how-netflix-scales-its-api-with-graphql-federation-part-2-bbe71aaec44a

https://docs.dapr.io/concepts/service-mesh/

https://www.apollographql.com/docs/federation/

https://moonhighway.com/apollo-federation

https://graphcms.com/blog/graphql-vs-rest-apis

https://www.apollographql.com/blog/graphql/basics/graphql-vs-rest/

https://movio.co/blog/building-a-new-api-platform-for-movio/

https://www.apollographql.com/blog/graphql/basics/the-anatomy-of-a-graphql-query/

https://itnext.io/a-guide-to-graphql-schema-federation-part-1-995b639ac035

https://itnext.io/a-guide-to-graphql-schema-federation-part-2-872a820510ff

https://www.sanity.io/guides/graphql-vs-rest-api-comparison

https://tyk.io/blog/an-introduction-to-graphql-federation/

https://hasura.io/blog/the-ultimate-guide-to-schema-stitching-in-graphql-f30178ac0072/
